4

Change classes (persistent / moved / removed / new)

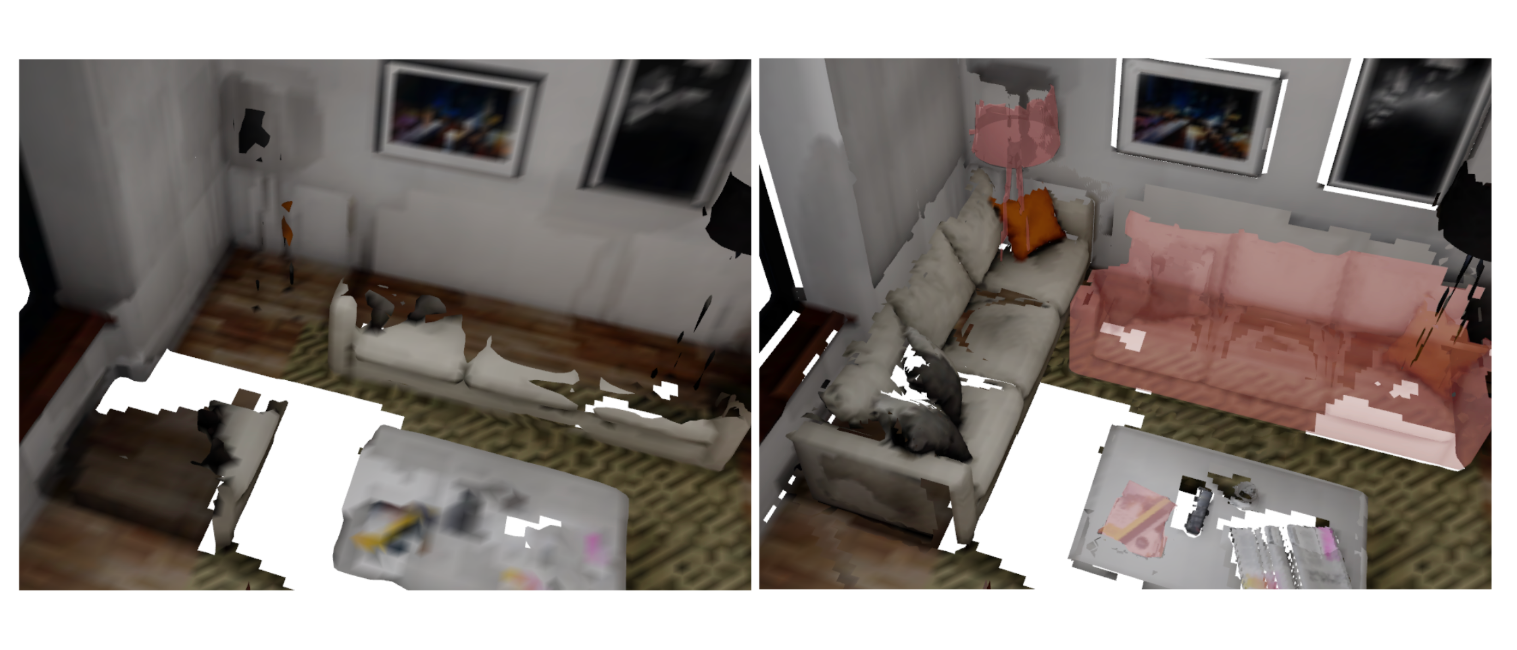

Object-level multi-session change detection on Meta Quest: capture a room, revisit it, and identify which objects moved, vanished, or appeared.

ETH Zürich, Mixed Reality course · 2025

MR headsets rely on a spatial map to anchor virtual content, but today's systems implicitly assume the room is static. Indoor environments evolve, and scene-level meshes or global occupancy maps don't separate one object from another, so they can't reason about what actually changed. The question we tackled: can we detect changes at the object level, not at pixel or mesh level, and visualise them inside the headset?

The pipeline captures multiple Quest sessions, then runs panoptic segmentation and a state-of-the-art monocular depth model on the recordings. The Panoptic Multi-TSDFs mapper (Schmid et al. 2022) builds an independent TSDF submap per object. Submaps are matched across sessions by centroid distance and volumetric overlap, classified as persistent, moved, removed, or new, and rendered back onto the mesh with colour-coded overlays and a temporal slider.

A Unity app on the headset records the synchronised inputs the mapper needs, plus a Python pipeline that fixes a few real-world capture issues.

Builds an independent volumetric submap per object, then matches submaps across sessions.

In-headset interface that renders the categorisation directly on the reconstructed mesh.

Unity on the headset, Python preprocessing offline, a panoptic TSDF mapper as the geometric backend, and the mixed-reality overlays back in Unity.

Key technical decisions

4

Change classes (persistent / moved / removed / new)

~20 cm

Depth inconsistency removed by swapping raw Quest depth for UniDepthV2

Object-level

Per-object TSDF submaps, independently updateable across sessions

Cross-session matching

Centroid distance plus volumetric overlap on per-object submaps is enough to classify all four change states without a learned matcher.