HILTI: Construction-Specific Instance Segmentation

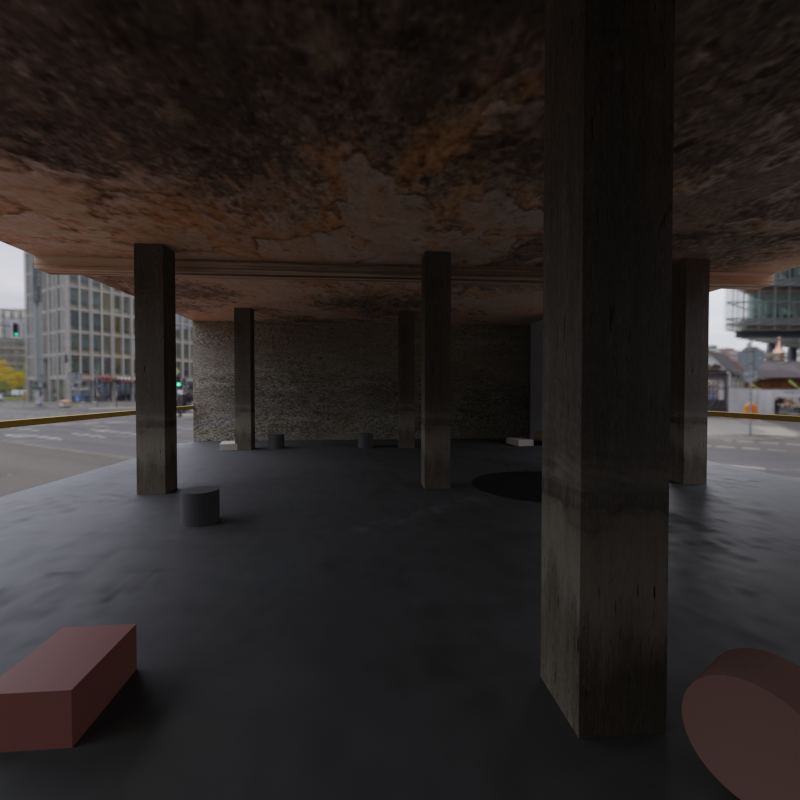

Instance segmentation of architectural components in 360° equirectangular video from active construction sites.

ETH Zürich, HILTI collaboration · In progress · 2026

Stack

- Python

- PyTorch

- Hugging Face

- Blender

Role

ETH project

Team

4 people

Status · Work in progress

Active research project. The Blender synthetic-data pipeline, ablation results across YOLO / Mask2Former / Roboflow, and final architecture decisions will be added as the work progresses and metrics come in.

Overview

Construction sites are filmed with 360° cameras for progress tracking and quality control. The footage comes out as equirectangular video, and the job is to detect six architectural components per frame (columns, staircases, doors, door frames, elevator shafts, outer windows) with per-instance masks. Two things make this hard. First, the projection distorts non-uniformly with latitude and wraps at the seam, so any object crossing the seam appears as two fragments at opposite image edges. Second, only a few hundred labelled frames exist, and construction interiors look nothing like COCO.

The pipeline tackles both. Re-projecting the panoramic frames into perspective views lets COCO-pretrained backbones do most of the work, and a Blender-based rendering pipeline manufactures labelled frames to make up for the data gap.

What is being built

1. Orthogonal projection pipeline

Re-projects each panoramic frame into multiple perspective views, runs segmentation in those views, and merges predictions back into the panorama.

- COCO/ADE20K-pretrained backbones run in their native perspective domain. No spherical convolutions, no architectural surgery.

- Overlapping tangent views handle seam-crossing instances; per-view masks are merged via IoU-based deduplication.

- Latitude-dependent distortion stays small inside each view because each view covers a limited field of view and the view centres are kept close to the equator.
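The re-projection step can be sketched as follows. This is a minimal NumPy illustration, not the project's implementation: the function name `perspective_view`, the 90° field of view, the view size, and nearest-neighbour sampling are all assumptions. It casts pinhole-camera rays, rotates them by the view's yaw and pitch, converts them to longitude/latitude, and looks up the matching panorama pixels. The modulo on the column index is what lets a view centred on the seam read from both image edges.

```python
import numpy as np

def perspective_view(erp, yaw_deg, pitch_deg, fov_deg=90.0, size=512):
    """Sample a square pinhole view from an equirectangular (ERP) frame.

    Assumed layout: erp is H x W (x C), longitude runs -180..180 deg from
    left to right, latitude runs +90 (top row) to -90 (bottom row).
    """
    h, w = erp.shape[:2]
    focal = 0.5 * size / np.tan(np.radians(fov_deg) / 2)  # focal length in pixels

    # Pixel centres -> unit rays in camera space (x right, y up, z forward).
    u, v = np.meshgrid(np.arange(size) + 0.5, np.arange(size) + 0.5)
    rays = np.stack([u - size / 2, size / 2 - v, np.full_like(u, focal)], axis=-1)
    rays /= np.linalg.norm(rays, axis=-1, keepdims=True)

    # Rotate rays into the panorama frame: pitch about x, then yaw about y.
    p, y = np.radians(pitch_deg), np.radians(yaw_deg)
    rot_pitch = np.array([[1, 0, 0],
                          [0, np.cos(p), np.sin(p)],
                          [0, -np.sin(p), np.cos(p)]])
    rot_yaw = np.array([[np.cos(y), 0, np.sin(y)],
                        [0, 1, 0],
                        [-np.sin(y), 0, np.cos(y)]])
    dirs = rays @ (rot_yaw @ rot_pitch).T

    # Ray direction -> spherical angles -> ERP pixel indices.
    lon = np.arctan2(dirs[..., 0], dirs[..., 2])
    lat = np.arcsin(np.clip(dirs[..., 1], -1.0, 1.0))
    col = np.floor((lon / (2 * np.pi) + 0.5) * w).astype(int) % w  # wrap at the seam
    row = np.clip(np.floor((0.5 - lat / np.pi) * h).astype(int), 0, h - 1)
    return erp[row, col]
```

Calling it at several yaw angles with overlapping fields of view would give the set of tangent views described above; a production version would likely use bilinear interpolation instead of nearest-neighbour lookup.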

2. Architecture ablations

YOLO, Mask2Former, and Roboflow are fine-tuned on the construction dataset to bracket the design space: fast/simple, slow/strong, and off-the-shelf.

- YOLO gives the quickest inference and built-in mosaic-style augmentation, but expects a square input and aggressively downscales the panoramic frames.

- Mask2Former has architectural classes in its ADE20K pretraining that overlap with the target taxonomy, giving better mask quality at the cost of inference speed.

- Roboflow is used mostly for auto-labelling and rapid iteration on the small dataset.
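To put a number on the downscaling mentioned in the YOLO bullet, here is a small worked example. It assumes aspect-preserving letterbox resizing into a 640 × 640 input and a 3840 × 1920 panorama; both figures are illustrative assumptions, not the project's actual settings, and `letterbox_shape` is a hypothetical helper.

```python
def letterbox_shape(width, height, target=640):
    """Return (new_w, new_h, pad_fraction) when fitting a frame into a
    target x target square without changing its aspect ratio."""
    scale = min(target / width, target / height)
    new_w, new_h = round(width * scale), round(height * scale)
    # Fraction of the square input that ends up as padding, not image.
    pad_fraction = 1.0 - (new_w * new_h) / (target * target)
    return new_w, new_h, pad_fraction

# Assumed 2:1 panorama resolution, for illustration only.
new_w, new_h, pad = letterbox_shape(3840, 1920)
print(new_w, new_h, pad)  # 640 320 0.5
```

Under these assumptions the frame shrinks six-fold in each dimension and half of the model input is padding, so a door that is 60 pixels wide in the panorama is left with roughly 10 pixels.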

3. Synthetic data via Blender

A few hundred labelled frames isn't enough for production-grade training, so we're building a procedural Blender pipeline that manufactures them.

- Generates synthetic construction interiors with parameterised columns, doors, windows, staircases, and elevator shafts.

- Renders straight to the same equirectangular format as the real camera, with ground-truth instance masks per frame.

- Plan: bootstrap training on the synthetic frames, then fine-tune on real labels.
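For the ground-truth masks, one plausible route is to have the renderer write an integer instance-ID image per frame (for example from an object-index style pass) and split it into per-instance masks afterwards. The sketch below assumes that ID image already exists as a NumPy array; the function name, the `id_to_class` lookup, and the annotation fields are hypothetical, not the pipeline's actual label format.

```python
import numpy as np

def annotations_from_id_map(id_map, id_to_class, background=0):
    """Split an integer instance-ID image into one annotation per instance."""
    annotations = []
    for inst_id in np.unique(id_map):
        if inst_id == background:
            continue
        mask = id_map == inst_id            # boolean mask for this instance
        rows, cols = np.nonzero(mask)
        annotations.append({
            "instance_id": int(inst_id),
            "class": id_to_class[int(inst_id)],
            "mask": mask,
            # Box as (x_min, y_min, x_max, y_max) in pixel indices.
            "bbox": (int(cols.min()), int(rows.min()),
                     int(cols.max()), int(rows.max())),
        })
    return annotations
```

Keeping this conversion identical for synthetic and real labels is what gives the two sources the same annotation format.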

Technical details

The pipeline converts equirectangular input into perspective views, runs an off-the-shelf segmentation backbone, and merges predictions back into the panoramic frame.

- Input: 360° equirectangular video at roughly one frame per second

- Output: per-instance masks with class labels and unique IDs

- Target classes: column, staircase, door, door frame, elevator shaft, outer window

- Models compared: YOLO (single-stage), Mask2Former (query-based transformer), Roboflow (off-the-shelf)

- Synthetic data: procedural Blender scenes rendered to equirectangular with per-instance masks
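The merge step can be sketched as a greedy IoU-based deduplication. This assumes each per-view prediction has already been projected back onto the panorama grid as a boolean mask with a class name and a confidence score; the 0.5 threshold and the keep-the-highest-score rule are illustrative choices, not the project's settled design.

```python
import numpy as np

def mask_iou(a, b):
    """Intersection over union of two boolean masks of equal shape."""
    union = np.logical_or(a, b).sum()
    return np.logical_and(a, b).sum() / union if union else 0.0

def deduplicate(instances, iou_thresh=0.5):
    """Greedy dedup over (mask, class_name, score) tuples.

    Instances are visited from highest to lowest score; one is dropped if a
    kept instance of the same class overlaps it by at least iou_thresh.
    """
    kept = []
    for mask, cls, score in sorted(instances, key=lambda t: t[2], reverse=True):
        is_duplicate = any(
            cls == kept_cls and mask_iou(mask, kept_mask) >= iou_thresh
            for kept_mask, kept_cls, _ in kept
        )
        if not is_duplicate:
            kept.append((mask, cls, score))
    return kept
```

The position of each surviving instance in the returned list can serve as its unique ID. An alternative design would take the union of overlapping masks instead of discarding the lower-scoring one, which may recover objects that each view sees only partially.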

Key technical decisions

- Orthogonal projection over spherical CNNs: Re-projecting to perspective lets us reuse COCO/ADE20K pretrained checkpoints. Spherical convolutions are more principled but need custom operators and lose most of the pretraining benefit.

- Multi-architecture ablation: YOLO, Mask2Former, and Roboflow span fast/simple, slow/strong, and off-the-shelf. Different bias/variance tradeoffs help isolate what's data-limited versus model-limited on a small dataset.

- Blender for synthetic data: Real construction-site labelling is expensive and slow. A procedural pipeline gives effectively unlimited annotated frames with full control over class balance.

Challenges & tradeoffs

- Equirectangular distortion: Standard CNNs assume a uniform pixel grid. ERP stretches non-uniformly with latitude, wraps at the seam, and has a 2:1 aspect ratio, none of which matches the square inputs that pretrained models expect.

- Data scarcity: A few hundred labelled frames, with severe class imbalance: columns appear in almost every frame, elevator shafts are rare.

- Synthetic-to-real domain gap: Blender renders give us unlimited labels but don't perfectly match real construction lighting, materials, and clutter. Closing the gap is the open research question.
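The latitude-dependent stretch in the first bullet has a simple closed form: every row of an equirectangular image has the same pixel width, while the circle of latitude it represents shrinks with the cosine of the latitude, so horizontal detail is stretched by 1 / cos(latitude) relative to the equator. A short sketch (the function name is illustrative):

```python
import math

def horizontal_stretch(lat_deg):
    """Horizontal stretch of an ERP row relative to the equator."""
    return 1.0 / math.cos(math.radians(lat_deg))

for lat in (0, 30, 60, 80):
    print(f"latitude {lat:>2} deg: stretch x{horizontal_stretch(lat):.2f}")
```

The factor is 1 at the equator, about 2 at 60°, and grows without bound towards the poles, which is why views centred near the equator are the least distorted.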

What I learned

- Re-projection beats spherical convolutions when COCO/ADE20K pretraining is on the table. Pretraining transfer outweighs the geometric purity of operating directly on the sphere.

- Bracketing model strength (YOLO vs Mask2Former vs Roboflow) is what tells you whether the bottleneck is data or model on a small dataset.

- Synthetic-data pipelines are systems problems, not rendering ones. Reproducibility and label-format parity matter more than render fidelity.

- Rare classes (elevator shafts) need explicit rebalancing in the synthetic pipeline; resampling the real frames alone doesn't fix it.
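One way to make the rebalancing explicit is to derive per-class quotas for the synthetic generator from the real label counts. The sketch below is an assumption about how that could look, not the pipeline's actual logic: the helper name, the target of 200 instances per class, and the label counts are all made up for illustration.

```python
from collections import Counter

CLASSES = ["column", "staircase", "door", "door frame",
           "elevator shaft", "outer window"]

def synthetic_quota(real_labels, target_per_class):
    """Number of synthetic instances needed per class to reach the target."""
    counts = Counter(real_labels)  # missing classes count as zero
    return {cls: max(0, target_per_class - counts[cls]) for cls in CLASSES}

# Made-up label counts, for illustration only.
real_labels = ["column"] * 300 + ["door"] * 120 + ["elevator shaft"] * 8
quota = synthetic_quota(real_labels, target_per_class=200)
print(quota)
```

With these invented counts, columns need no synthetic instances while elevator shafts need 192, so the procedural scene generator would be steered towards layouts that contain shafts.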