2.90 m

MAE

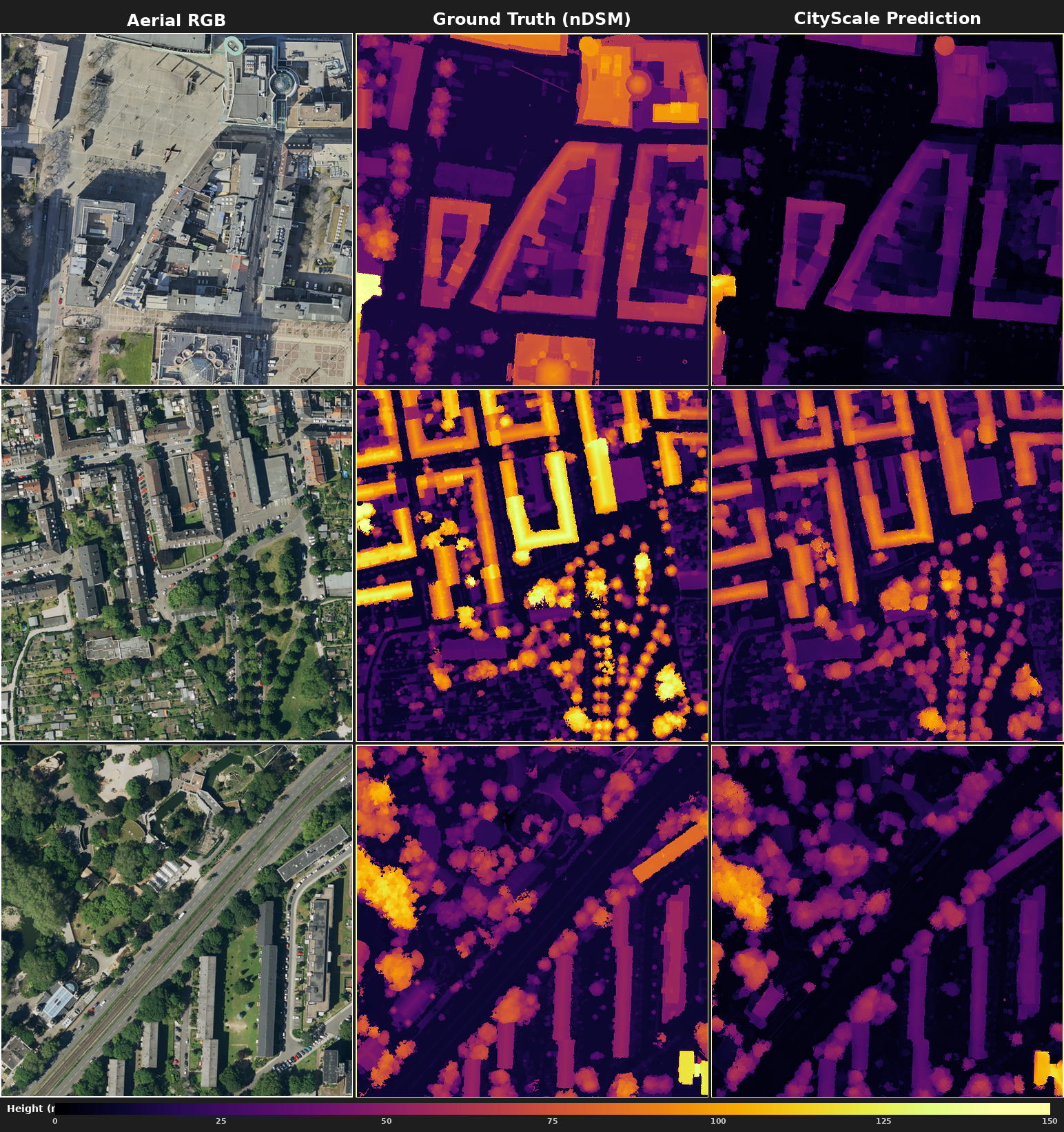

Estimate absolute building heights from a single aerial RGB image, using latent diffusion with learned scale heads.

ETH Zürich, Photogrammetry & Remote Sensing Lab · Sep 2025 – Present

Stack

Role

Research contribution

Team

1 person

Building-height maps (nDSMs) drive urban planning, flood risk, and climate-adapted architecture, but today they come from expensive LiDAR or multi-view stereo campaigns. National mapping agencies already capture single-view aerial imagery routinely, so a model that reads metric heights off one photo would cut city-scale 3D reconstruction by an order of magnitude. The catch is that one overhead photo carries no scale. Two rooftops can look identical and differ in height by an order of magnitude, and diffusion-based depth models like Marigold only give you relative depth, needing a calibration pass against ground-truth elevation maps to recover real metres.

CityScale adds learned scale heads on top of Marigold and trains them jointly with the backbone. Two losses run side by side: the diffusion denoising objective preserves the depth prior, and a pixel-level metric-height regression supplies absolute scale. The result is metre-valued elevation maps from one forward pass, no calibration step.

Marigold's frozen VAE and denoising U-Net give relative depth. The scale heads turn it into metric heights.

A 23-run sweep across head architectures, loss weights, and learning rates.

Tested on six European cities, then started looking at whether synthetic tall-building data could lift the height-clipping ceiling that limits Frankfurt-style skylines.

Marigold's latent-diffusion depth backbone fine-tuned end-to-end alongside a small learned scale head.

Key technical decisions

2.90 m

MAE

5.83 m

RMSE

+0.06 m

Median bias

Generalisation

Transfers to Switzerland, France, and Germany without retraining.

This was my first hands-on experience training large-scale models end-to-end. I designed and iterated on my own scale-head architectures from scratch, and it was also my first time running on HPC, with multi-GPU sweeps on ETH's Euler cluster. The most useful lesson, in retrospect, is to integrate well-tested SOTA code where it exists instead of reimplementing components yourself. Published implementations have already absorbed the small bugs and edge cases that bite you when you roll your own.